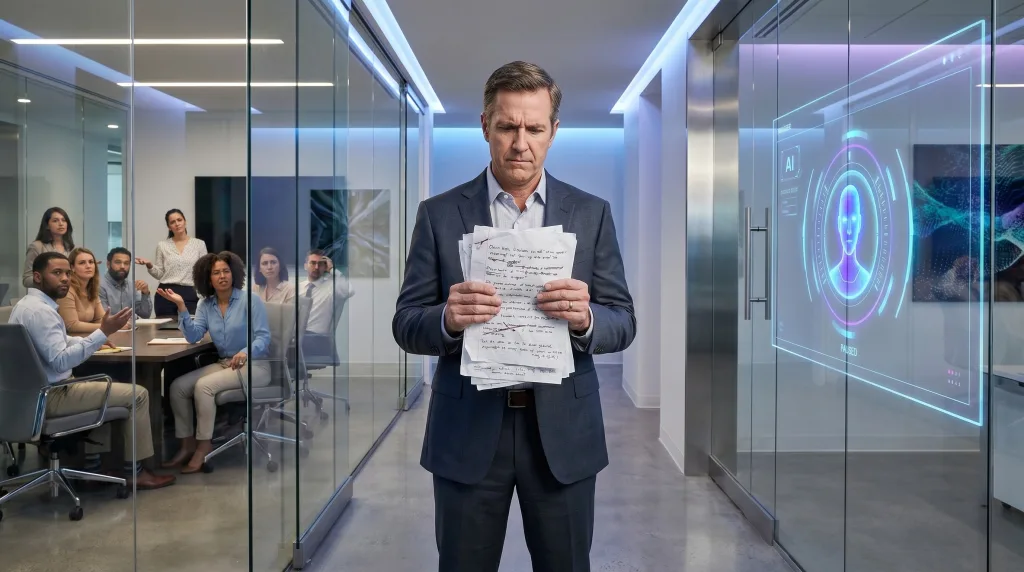

The day a CEO works with an AI agent as a right hand, many people will realize that the era has already changed.

For years, artificial intelligence was sold as a tool for teams. A co-pilot to write, summarize, automate, classify, and accelerate. A productivity layer applied to existing jobs.

Then the hierarchy shifts.

According to The Wall Street Journal, Mark Zuckerberg is developing an AI agent to help him directly in his role as CEO, so he can get answers faster than by moving through multiple layers of the organization. The same report also describes broader internal use at Meta of tools such as “Second Brain,” “My Claw,” and personal agents used to navigate documents, chats, and projects (Wall Street Journal, Reuters).

When AI moves up the org chart

This is a major shift because it moves AI from execution to steering.

Until now, many organizations placed AI in production. Help for marketing. Help for support. Help for developers. Help for HR. In other words, AI served the people who build, answer, code, analyze, or sell.

When the leader starts relying on an agent, AI leaves the operational basement and enters the control room of power.

It no longer serves only to do things faster.

It serves to see faster.

To arbitrate faster.

To bypass delays.

To short-circuit layers.

To reduce dependence on internal filters.

That is where many people underestimate the scale of the change.

What this signal really means

When a CEO equips himself with a personal AI agent, he sends a quiet message across the company: value no longer lies only in holding information, but in interpreting it, ranking it, challenging it, and turning it into meaning.

That means every layer of the company that lived partly off circulating, filtering, or reformulating information now faces rapid redefinition.

The subject becomes political immediately.

An organization is never just a rational machine. It is an architecture of power, delays, validations, turf protection, and sometimes information hoarding. If an AI agent allows the CEO to pull answers directly from documents, logs, dashboards, and conversations, then some intermediaries automatically lose part of their symbolic usefulness.

And when symbolic usefulness weakens, tension rises.

The leader’s AI is not a gadget

At Meta, this move is part of a broader strategy. The company said in late January 2026 that it had 78,865 employees as of December 31, 2025, and announced massive 2026 capital expenditures of $115 billion to $135 billion, largely driven by AI and infrastructure. Meta also stated that it continues to make significant investments in AI initiatives, including infrastructure and specialized technical headcount (Meta Investor Relations, SEC, About Meta).

That context matters.

The CEO’s agent is not a curious executive’s toy.

It is the visible tip of a deeper redesign: the company is reorganizing itself to become natively assisted, and sometimes steered, by AI systems.

From that point on, the question is no longer, “Should we test AI?”

The question becomes, “What level of decision, coordination, and arbitration are we willing to delegate to systems?”

The real shock: human value must be redefined

In chapter 14 of my book, I explain that when AI arrives inside an organization, employees immediately ask survival questions: “What does this mean for me?”, “How obsolete have I become?”, and “Is my job at risk?”

That is why this kind of announcement is far from trivial.

When the CEO augments himself, the whole company enters a reassessment zone. Not only execution roles. Also coordination roles, synthesis roles, reporting roles, mediation roles, project facilitation roles, and sometimes even layers of middle management.

If your value rests mostly on moving information upward, consolidating notes, or serving as a required bridge between silos, the signal becomes uncomfortable.

If your value rests on judgment, discernment, arbitrating under uncertainty, building trust, reading weak human signals, giving courage, or owning a difficult responsibility, then your role becomes more strategic, not less.

Short-circuiting is not the same as governing better

There is, however, a trap.

Going faster does not automatically mean deciding better.

AI can reduce informational friction. It can aggregate, retrieve, compare, and summarize. It can save immense time for an executive drowning in layers, meetings, and validations.

But it can also create an illusion of mastery.

A CEO who gets answers faster may believe he understands the organization better, while in reality he mainly understands better what the data is already able to show. A company is never reducible to its documents, dashboards, or internal chats.

Real tensions, unspoken fears, passive resistance, disengagement, ego games, hidden conflicts, and political silence do not always surface neatly inside a system.

So the danger is not only technical.

The danger is that a leader mistakes speed of access for depth of understanding.

What must remain deeply human

The companies that will navigate this well will not be the ones that inject AI everywhere with excitement.

They will be the ones that do three things clearly.

First, redefine roles before panic starts speaking in place of strategy. If nobody knows what is changing, everyone will invent a personal version of the threat.

Second, make explicit what remains human by choice, not by inertia. Final responsibility, courage in ambiguity, ethics in arbitration, conflict handling, trust building, and the reading of weak emotional signals all deserve to be named.

Third, train leaders themselves to read the political and human implications of AI. An augmented executive committee without organizational maturity can move very fast in the wrong direction.

We are no longer talking about tools. We are talking about governance.

This moment is fascinating because it reveals the true nature of AI inside companies.

At first, it often enters through convenience.

Then it settles through productivity.

Then it climbs toward decision-making.

And when it reaches the CEO level, it stops being a tooling topic.

It becomes a governance topic.

At that point, many discover that the central issue was never the raw performance of the machine.

The central issue is this: what will we still consider irreplaceably human when leaders themselves start augmenting their work with agents?

That is where the next frontier begins.