AI models just sat an exam. And, symbolically, they turned in a blank page.

The point isn’t to mock the models. The point is to observe what we project onto them.

For years, we’ve confused high scores with understanding. On many “classic” benchmarks, models can score above 90%, but these tests share a structural flaw: many of the answers are publicly available online, so simple pattern matching can look like brilliance.

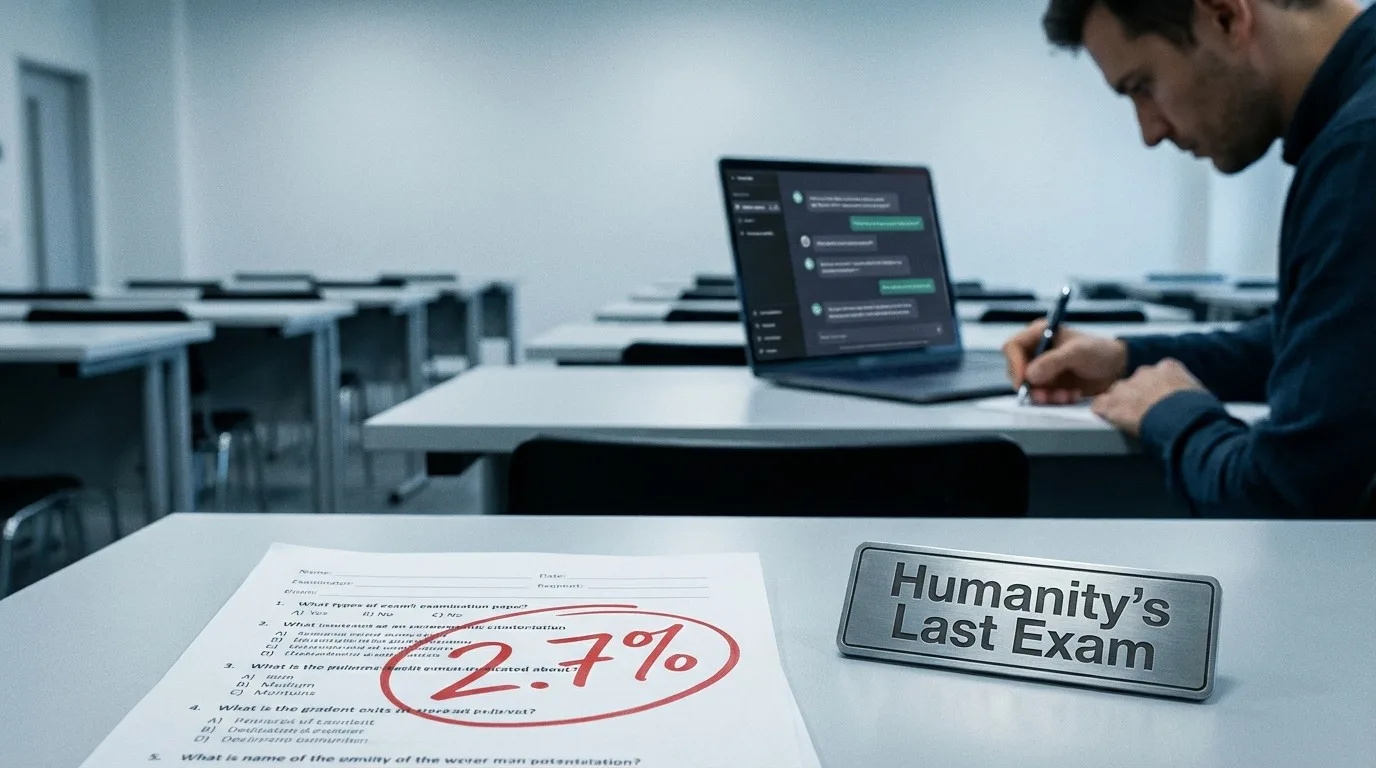

So researchers built an antidote to bluff: Humanity’s Last Exam (HLE), a benchmark designed to measure what saturated tests can’t anymore—performance when memorization and web lookup no longer save you.

An exam designed to resist “internet cheating”

HLE contains 2,500 questions across 100+ fields, written and verified by 1,000+ experts. The goal: closed-ended questions with a single, verifiable answer, yet not easily solvable via quick retrieval. (Center for AI Safety), (Nature)

In other words: a serious attempt to measure academic robustness—not just fluency.

The outcome: top models “collapse”… and stay confident anyway

Early results are striking: even frontier models remain far from expert-level performance. For instance, public sources report Gemini 3.1 Pro at around 48.4% on HLE, with the other tested models below that. (LiveScience), (Artificial Analysis), (Epoch AI)

The most worrying part isn’t the failure. It’s the certainty.

The associated Nature paper notes that models often provide incorrect answers with high confidence, highlighting severe calibration issues (e.g., large RMS calibration errors reported for most models). (Nature)

Translation: a model can be wrong without signaling doubt.
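To make “calibration” concrete, here is a minimal sketch of a binned RMS calibration error in Python. It is an illustration, not the paper's evaluation code: the function name, the equal-width binning, and the choice of 10 bins are all assumptions made for this example, and the paper's exact procedure may differ. The idea is to group answers by stated confidence, compare each group's average confidence with its actual accuracy, and take the root of the weighted mean squared gap.

```python
import math


def rms_calibration_error(confidences, correct, n_bins=10):
    """Binned RMS calibration error (illustrative sketch).

    confidences: stated confidence per answer, each in [0, 1]
    correct:     True/False per answer, same length as confidences
    n_bins:      number of equal-width confidence bins (an assumption)
    """
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    if not confidences:
        raise ValueError("need at least one answer")

    # Group answers into equal-width confidence bins.
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[index].append((conf, ok))

    total = len(confidences)
    weighted_sq_gap = 0.0
    for bucket in bins:
        if not bucket:
            continue
        mean_conf = sum(conf for conf, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        # Weight each bin by the share of answers it contains.
        weighted_sq_gap += (len(bucket) / total) * (mean_conf - accuracy) ** 2

    return math.sqrt(weighted_sq_gap)


# A model that says "90% sure" on ten answers but gets only two right.
overconfident = rms_calibration_error([0.9] * 10, [True] * 2 + [False] * 8)

# A model that says "80% sure" on five answers and gets four right.
well_calibrated = rms_calibration_error([0.8] * 5, [True] * 4 + [False])
```

In this toy example the first model's error comes out at about 0.7 (it claims 90% and delivers 20%), while the second is close to zero. Accuracy alone would not show that difference in trustworthiness.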

That’s the enterprise trap: you’re not buying plausible text—you’re buying decision reliability.

The operational lesson: we don’t lack power, we lack clarity

HLE is a mirror. It doesn’t say “AI is useless.” It says:

- Easy benchmarks stop being informative once they saturate. (Center for AI Safety)

- Confidence does not equal reliability, creating over-delegation risk. (Nature), (Nature Machine Intelligence)

- The costliest mistake isn’t “AI makes errors,” but “the organization treats AI like an expert.”

In chapter 14 of my book, I stress a point that becomes decisive here: the first AI adoption project isn’t performance, it’s clarity of use. That means deciding what you expect, what you verify, and what you refuse to automate.

Assistant vs. expert: the question that changes everything

1) If you use AI as an assistant

You get:

- speed (drafts, summaries, variations, exploration),

- creative support,

- formatting,

- preliminary research (to be validated).

You keep:

- accountability,

- verification,

- arbitration,

- proof.

2) If you treat AI as an expert

You inherit structural risk:

- persuasive hallucinations,

- silent errors,

- decisions taken “in a confident tone.”

HLE doesn’t say “don’t use AI.” It says: assign the right role—and enforce a verification protocol when stakes rise.

Three practical reflexes after HLE

- Demand a “doubt mode”: sometimes the best output is “I don’t know.” (Nature)

- Separate production and validation: AI produces, humans (or a second system) validate.

- Measure calibration, not just accuracy: miscalibrated confidence is a governance risk. (Nature), (Nature Machine Intelligence)
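The first two reflexes can be sketched as a small routing rule. This is a hypothetical illustration, not a reference implementation: `ModelAnswer`, `route`, the `confidence` field, and the 0.5 threshold are all names and values invented for this example, and it assumes your system can obtain some confidence estimate for each answer.

```python
from dataclasses import dataclass


@dataclass
class ModelAnswer:
    text: str
    confidence: float  # hypothetical: a confidence estimate in [0, 1]


def route(answer: ModelAnswer, high_stakes: bool, abstain_below: float = 0.5) -> str:
    """Decide what happens to a model answer (illustrative sketch).

    Returns one of:
      "abstain"       - surface "I don't know" instead of the answer
      "human_review"  - a human (or a second system) must validate it
      "use_as_draft"  - low-stakes output, used in the assistant role
    """
    # Doubt mode: below the threshold, the best output is "I don't know".
    if answer.confidence < abstain_below:
        return "abstain"
    # Separate production and validation: high stakes always get a second look,
    # no matter how confident the answer sounds.
    if high_stakes:
        return "human_review"
    return "use_as_draft"


decision = route(ModelAnswer("The contract clause is enforceable.", 0.97), high_stakes=True)
```

Note the design choice: a high-stakes answer goes to review even at 97% confidence. Since stated confidence is exactly what HLE shows to be unreliable, confidence here can only trigger an abstention; it can never exempt an answer from validation.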

👉 In your organization, do you use AI as an assistant, or are you already treating it like an expert?

References

(Center for AI Safety) = https://agi.safe.ai/

(Nature) = https://www.nature.com/articles/s41586-025-09962-4

(Epoch AI) = https://epoch.ai/benchmarks/hle

(Artificial Analysis) = https://artificialanalysis.ai/evaluations/humanitys-last-exam

(LiveScience) = https://www.livescience.com/technology/artificial-intelligence/acing-this-new-ai-exam-which-its-creators-say-is-the-toughest-in-the-world-might-point-to-the-first-signs-of-agi

(Nature Machine Intelligence) = https://www.nature.com/articles/s42256-024-00976-7