We applaud perfect answers. Then reality shows up.

AI is being sold the way top students used to be sold.

It passes exams.

It dominates benchmarks.

It writes with confidence.

It answers fast.

It produces clean, polished output.

The performance is effective.

For months, the market has been feeding on demonstrations in which models shine on academic, professional, and standardized tests. OpenAI helped cement that narrative when it presented GPT-4 as capable of passing a simulated bar exam with a score around the top 10% of test takers (OpenAI). The implied message was simple: if the machine crushes the exam, it must be closing in on human intelligence itself.

Then ARC-AGI-3 arrives.

And suddenly the whole story starts wobbling.

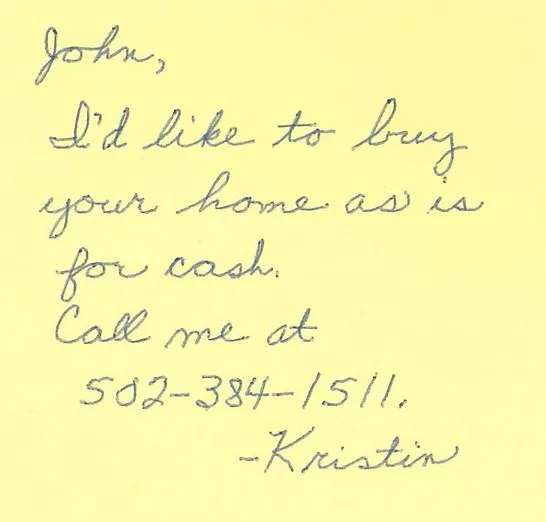

Click on the image above or here to play the game that stumps AI.

ARC-AGI-3 does not ask for recall. It asks for understanding.

ARC-AGI-3 is not just one more benchmark added to an already crowded shelf. It is an interactive benchmark built to measure a form of reasoning that is much closer to real adaptation inside unknown environments. It is made of hundreds of handcrafted turn-based game environments with no instructions, no explicit rules, and no stated goal. The agent has to explore, infer what matters, understand what winning means, and carry learning forward as the levels get harder (ARC Prize, arXiv).

That is where the gap becomes fascinating.

ARC Prize reports humans at 100%, while frontier AI systems remain below 1%. The launch post states it bluntly: “Humans score 100%. Frontier AI scores 0.26%.” The public leaderboard confirms how tiny current scores still are on this benchmark family (ARC Prize, ARC Prize Launch, ARC Prize Leaderboard).

In plain English: the same machine that can produce a brilliant answer on a codified exam can also become nearly useless when forced to explore a new world without explicit guidance.

The business problem is not technical. It is conceptual.

Many teams are still looking at AI through outdated lenses.

They see a polished answer.

They infer understanding.

They see a high score.

They infer judgment.

They see fluency.

They infer strategy.

That is a category error.

Succeeding inside a known structure is not the same thing as learning inside the unknown.

Compressing massive quantities of data is not the same thing as building a model of a new world.

Producing persuasive output is not the same thing as understanding the situation that just emerged.

ARC-AGI-3 restores a healthier hierarchy to the discussion: the intelligence that matters most in unstable environments is not recall, but adaptation. The technical paper explicitly emphasizes exploration, goal inference, internal world-model building, and effective action planning without explicit instructions (arXiv).
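To make those four capabilities concrete, here is a toy sketch in Python. It is not the ARC-AGI-3 interface and it is far simpler than any real environment in the benchmark. Every name in it (`HiddenRuleCorridor`, `explore_and_solve`, the action labels `A`, `B`, `C`) is a hypothetical chosen for illustration. The point is only the shape of the problem: the agent is told nothing, so it must try actions, record what changes, and keep moving until the environment itself signals a win.

```python
from dataclasses import dataclass, field


@dataclass
class HiddenRuleCorridor:
    """Toy stand-in for an instruction-free environment (hypothetical).

    The agent sees only its position and a 'won' flag. What each action
    does, and which cell is the goal, are hidden inside the environment.
    """
    size: int = 7
    goal: int = 5
    position: int = 2
    _effects: dict = field(default_factory=lambda: {"A": -1, "B": 0, "C": 1})

    def step(self, action: str) -> tuple[int, bool]:
        # Apply the hidden effect, clipped to the corridor walls.
        move = self._effects[action]
        self.position = max(0, min(self.size - 1, self.position + move))
        return self.position, self.position == self.goal


def explore_and_solve(env, actions=("A", "B", "C"), max_steps=50):
    """Return (won, steps_used, learned_model) for a corridor-like env."""
    model = {}               # learned world model: action -> observed shift
    position = env.position  # the initial observation
    steps = 0

    # Phase 1, exploration: try each action once and record what changed.
    for action in actions:
        new_position, won = env.step(action)
        steps += 1
        model[action] = new_position - position
        position = new_position
        if won:
            return True, steps, model

    # Phase 2, goal inference by sweeping: the goal is unknown, so push
    # each useful action until the win signal appears or a wall is hit.
    for action, shift in model.items():
        if shift == 0:
            continue  # this action does nothing useful, skip it
        while steps < max_steps:
            new_position, won = env.step(action)
            steps += 1
            if won:
                return True, steps, model
            if new_position == position:
                break  # no movement: we hit a wall, try another action
            position = new_position

    return False, steps, model


if __name__ == "__main__":
    won, steps, model = explore_and_solve(HiddenRuleCorridor())
    print(won, steps, model)
```

Even in this one-dimensional toy, nothing useful happens until the agent has spent moves learning what its actions do. Real ARC-AGI-3 environments add far richer rules and harder levels, which is exactly where the reported gap opens up.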

The real issue is learning in context

Inside many organizations, people are still rewarded the same way some models are still celebrated: for their ability to reproduce a known frame correctly.

What gets rewarded:

speed,

compliance,

clean language,

mastery of the familiar.

What gets under-rewarded:

exploration,

trial and error,

problem reframing,

learning before concluding.

Yet the next decade will favor fewer champions of repetition and more professionals who can move before the manual arrives, test without total certainty, observe carefully, and then adjust fast.

That is the slap delivered by this benchmark.

ARC-AGI-3 does not say AI is useless.

It says something far more interesting:

AI is extraordinary in some structured settings,

and still visibly fragile in interactive novelty.

That distinction matters.

The most expensive illusion: mistaking verbal ease for general intelligence

The danger is not that AI is improving.

The danger is misreading the nature of that improvement.

When a model speaks with confidence, humans rapidly project meaning, coherence, intention, and sometimes even depth that is not fully there yet. That projection is not new. It happens with every spectacular technology. The difference with generative AI is that the machine speaks our language well enough to fool our social intuition.

It sounds right.

So we assume it is right.

It feels structured.

So we assume it is strategic.

It wins a benchmark.

So we assume it understands the world.

ARC-AGI-3 reminds us that linguistic polish and adaptive reasoning do not necessarily move at the same speed.

For leaders, that reminder is worth a fortune.

What this changes for management

In chapter 14 of my book, I explain that AI adoption must be treated as a process innovation, with clear vision, strategy, experimentation, and communication, not as a chaotic rush toward the tool of the month.

ARC-AGI-3 strengthens that point.

The company that wins with AI will not be the one with the most demos.

It will be the one that can separate four things clearly:

1. What AI already does extremely well

Summarizing, reformulating, assisting, accelerating, structuring, generating variants, and helping analyze certain types of information at scale.

2. What it still does poorly in novelty

Exploring opaque environments, learning in context from weak signals, inferring unstated goals, and independently building the right representation of a new world.

3. What humans must strengthen

Judgment, decision-making, contextual framing, experimentation, false-positive detection, and fast learning.

4. What organizations must stop confusing

Productivity with understanding.

Fluency with depth.

Benchmarks with reality.

Demos with robustness.

The future belongs to fast learners

So the most useful takeaway is not technological. It is human.

The future does not belong to those who can recite the past most elegantly.

It belongs to those who can learn faster than the environment changes.

That is true for individuals.

That is true for teams.

That is true for organizations.

And it is also true for AI systems themselves.

The beauty of this moment is that the surface polish is finally cracking in the right place.

We are leaving the era where sounding like an expert was enough to be treated like one.

We are entering a period where another quality will matter more:

the ability to understand when the ground no longer follows yesterday’s rules.

AI can pass the bar exam.

Fine.

But when it enters a room with no instructions, inside an environment it does not yet understand, it runs into something many companies have been avoiding for years as well:

real learning is far harder than brilliant answering.

That is why the strongest organizations tomorrow will recruit, train, and promote fewer champions of polish and more champions of learning in motion.

Everyone else will produce impressive demonstrations.

Then hit the wall.

References

(OpenAI) = https://openai.com/index/gpt-4-research/

(OpenAI PDF) = https://cdn.openai.com/papers/gpt-4.pdf

(ARC Prize Launch) = https://arcprize.org/blog/arc-agi-3-launch

(ARC Prize) = https://arcprize.org/arc-agi/3/

(ARC Prize Leaderboard) = https://arcprize.org/leaderboard

(arXiv) = https://arxiv.org/abs/2603.24621

(Revolution in AI) = https://www.revolutioninai.com/2026/03/arc-agi-3-benchmark-ai-scores-openai-spud-anthropic-2026.html

(Scaling01) = https://x.com/scaling01/status/2036853669065306534

(ARC Task LS20) = https://arcprize.org/tasks/ls20